Network edge workloads, traffic growth to impact data center design

The growth in sales of connected devices is good news for some companies, but datacenter providers are looking at the resulting changes in internet traffic patterns and seeing a number of potential headaches – and opportunities. The end result will be a shift towards larger numbers of “right-sized” datacenters; the near-term challenge is building a new equation between location, energy, and connectivity for facilities shaped by changing consumer and enterprise workloads.

Moving traffic in and out of the data center

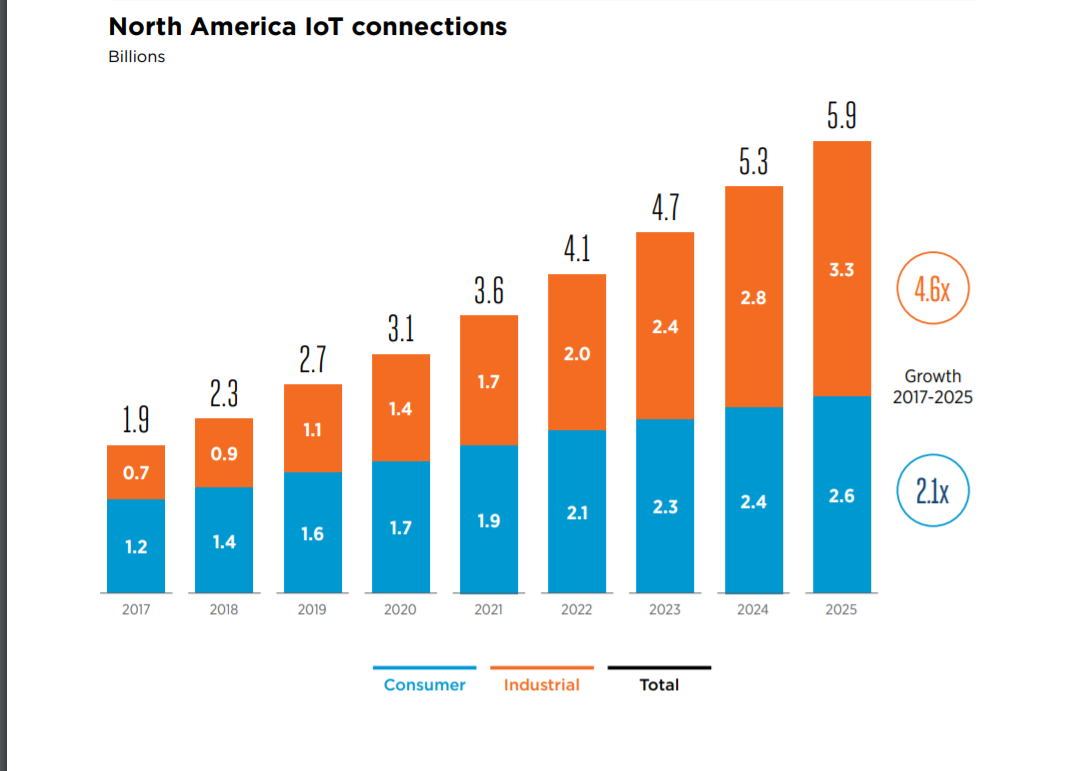

There are astonishing forecasts regarding the sheer number of consumer and industrial devices that are being sold and installed. The GSMA trade group, for example, forecasts 5.9 billion IoT devices connected to the internet (by both cellular and non-cellular wireless connections).

Number of IoT connections in North America from 2017 to 2025 (in billions)

Source: GSMA Intelligence, 2018

While the dispersion of devices far afield of key data center markets is important, we can’t overlook the impact of data traffic. The volume of traffic on data center networks matters, but traffic patterns and the underlying compute and storage workloads are not homogeneous, and they will also shape data center design.

How fast will bandwidth consumption grow in datacenters? Drilling down into the figures from Cisco’s Visual Networking Index (VNI) for 2017-2022, we see estimates that:

• The average smartphone will generate 11GB of mobile data traffic per month by 2022, up from 2GB per month in 2017.

• By 2022, video will account for 79% of mobile traffic on a global basis, compared to 59% in 2017.

Meanwhile, industrial IoT traffic will also grow rapidly:

• M2M traffic will reach 1.7 exabytes per month by 2022, a compound annual growth rate (CAGR) of 52% from 2017.
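The growth figures above can be sanity-checked with the standard compound annual growth rate formula. This is a minimal sketch: the function names are illustrative, and the 2017 M2M baseline it prints is derived from the quoted 1.7 EB and 52% figures, not a number Cisco published here.

```python
# Sanity-check the Cisco VNI growth figures using the standard
# compound annual growth rate (CAGR) formula.

def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1


def implied_start(end: float, rate: float, years: int) -> float:
    """Back out the starting value from an end value and a CAGR."""
    return end / (1 + rate) ** years


# Smartphone traffic: 2 GB/month (2017) -> 11 GB/month (2022), 5 years.
smartphone_rate = cagr(2.0, 11.0, 5)

# M2M traffic: 1.7 EB/month in 2022 at a 52% CAGR from 2017.
m2m_2017 = implied_start(1.7, 0.52, 5)

print(f"Smartphone traffic CAGR: {smartphone_rate:.1%}")
print(f"Implied 2017 M2M traffic: {m2m_2017:.2f} EB/month")
```

Run as written, this puts per-smartphone traffic growth at roughly 41% a year and implies an M2M starting point of about 0.21 EB/month in 2017, i.e. roughly an eightfold increase over five years.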

The impact of video on datacenters

The need to process data from increasingly diverse and mobile devices and users is already changing the equation between location, power consumption, and connectivity that has defined most multi-tenant data center designs to date. The varying requirements of different workloads are also going to impact data center design.

For video-centric services, some workloads are compute intensive: video encoding and transcoding, plus AI applied to processes like audio-to-text indexing (including closed captioning of video). Video libraries also require massive amounts of storage. All of these elements of a service can be handled in large, centralized data centers, but as more users access services from mobile devices, added latency and variable wireless bandwidth quickly add up to a sub-par user experience.
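Distance alone sets a floor on latency. The sketch below assumes light travels through optical fiber at roughly 200,000 km/s (about two-thirds of its vacuum speed) and uses hypothetical fiber-route distances; real round-trip times are higher once routing, queuing and radio access delays are added.

```python
# Lower bound on network round-trip time (RTT) from fiber propagation alone.
# Assumption: ~200,000 km/s in fiber, i.e. ~200 km per millisecond.

FIBER_KM_PER_MS = 200.0


def min_rtt_ms(fiber_route_km: float) -> float:
    """Minimum round-trip propagation delay over a fiber path, in ms."""
    return 2 * fiber_route_km / FIBER_KM_PER_MS


# Hypothetical fiber-route distances from a user to each kind of facility.
sites = {
    "centralized data center": 1500.0,
    "regional facility": 300.0,
    "edge site near a cell tower": 10.0,
}

for name, km in sites.items():
    print(f"{name:>28}: >= {min_rtt_ms(km):.1f} ms RTT")
```

The floor shrinks linearly with distance, so moving delivery closer to users removes this component almost entirely; it does not remove the variability of the wireless link itself.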

Netflix and Akamai have been using services from data center providers like EdgeConnex for several years to move content delivery closer to end users. For network service providers, moving content delivery deeper into their networks will also affect data centers: Edge Gravity (in partnership with Limelight Networks), Akamai and others are placing more equipment in smaller regional facilities. However, traffic growth will strain network service provider facilities, and content providers will likely still supplement their delivery capabilities with capacity at the infrastructure edge (see sidebar) from providers such as Compass Datacenters, EdgeMicro, Vapor IO and others.

It’s not clear which of these edge-focused data center providers will achieve long-term success. But this much is evident: the long-term impact of video consumption will require investment in larger numbers of “small” datacenters. And what constitutes small? In terms of capacity, the initial EdgeConnex sites were in the 2-4 MW range, with CDNs and Netflix as key customers, while edge datacenters near cell towers might have 150 to 300 kW of power.
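To put the two size classes side by side, here is a rough sketch that treats both the 2-4 MW and the 150-300 kW figures as total site power and ignores differences in cooling overhead between site types (a simplifying assumption).

```python
import math

# How many cell-tower-scale edge sites match one early EdgeConnex-scale site?
# Assumption: both figures are total site power with comparable overhead.


def sites_needed(total_kw: float, site_kw: float) -> int:
    """Number of smaller sites needed to match a given total capacity."""
    return math.ceil(total_kw / site_kw)


for large_mw in (2, 4):
    for small_kw in (150, 300):
        n = sites_needed(large_mw * 1000, small_kw)
        print(f"{large_mw} MW ~= {n} sites of {small_kw} kW")
```

Under these assumptions, one 2-4 MW site corresponds to somewhere between 7 and 27 cell-tower-scale sites, which is the arithmetic behind "larger numbers of small datacenters."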

The impact of AI/ML on datacenters

The growth in connected devices means there’s more data that can be analyzed and used to power new services or improve business efficiency. For example, data from factory sensors can be used to make manufacturing processes more efficient, but in a traditional cloud computing architecture, the massive amounts of data captured at remote locations must be transmitted to a distant corporate or cloud data center for processing and analysis.
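A back-of-the-envelope sizing shows why backhauling raw sensor data gets expensive. Every input below (sensor count, sample rate, sample size, and the size of an edge-generated summary) is a hypothetical placeholder chosen for illustration.

```python
# Back-of-the-envelope sizing for backhauling factory sensor data to a
# distant cloud region. All inputs are hypothetical placeholders.

SECONDS_PER_DAY = 86_400


def daily_volume_gb(sensors: int, samples_per_sec: float, bytes_per_sample: int) -> float:
    """Raw data generated per day, in gigabytes (10**9 bytes)."""
    return sensors * samples_per_sec * bytes_per_sample * SECONDS_PER_DAY / 1e9


def sustained_mbps(gb_per_day: float) -> float:
    """Average uplink rate needed to ship one day's data within that day."""
    return gb_per_day * 8e9 / SECONDS_PER_DAY / 1e6


# Hypothetical plant: 5,000 vibration sensors sampled at 1 kHz, 4 bytes/sample.
raw = daily_volume_gb(5_000, 1_000, 4)
print(f"raw: {raw:,.0f} GB/day, needs ~{sustained_mbps(raw):,.0f} Mbit/s")

# Hypothetical edge processing: forward one 64-byte summary per sensor per second.
summary = daily_volume_gb(5_000, 1, 64)
print(f"summarized: {summary:,.1f} GB/day, ~{sustained_mbps(summary):,.1f} Mbit/s")
```

With these made-up inputs the raw stream is about 1.7 TB/day (a sustained 160 Mbit/s), while forwarding only summaries cuts it by well over an order of magnitude; the ratio, not the absolute numbers, is the point.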

Leveraging data centers closer to data sources can help solve the networking problem, but it raises the question of how to power and cool the systems that drive business insights. Whether data is processed in a core cloud or an edge cloud, enterprises need to weigh the cost of power alongside the cost of bandwidth. The driver here? Power-hungry AI, ML, and other data processing workloads.
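Weighing power against bandwidth can be framed as a simple monthly cost model. This is a minimal sketch, not a pricing tool: the facility loads, electricity prices and transfer prices below are hypothetical placeholders, and real comparisons would add space, equipment and staffing costs.

```python
# Minimal monthly cost model: facility energy cost plus network transfer cost.
# All loads and prices are hypothetical placeholders, not market data.

HOURS_PER_MONTH = 730  # average hours per month (8,760 / 12)


def monthly_cost(facility_kw: float, usd_per_kwh: float,
                 transfer_tb: float, usd_per_tb: float) -> float:
    """Energy cost of the facility's average draw plus data transfer cost."""
    energy = facility_kw * HOURS_PER_MONTH * usd_per_kwh
    network = transfer_tb * usd_per_tb
    return energy + network


# Option A (hypothetical): ship raw data to a core region with cheaper power.
core = monthly_cost(facility_kw=50, usd_per_kwh=0.06, transfer_tb=50, usd_per_tb=20)
# Option B (hypothetical): process at an edge site with pricier power, send summaries.
edge = monthly_cost(facility_kw=60, usd_per_kwh=0.12, transfer_tb=1, usd_per_tb=20)

print(f"core: ${core:,.0f}/month  edge: ${edge:,.0f}/month")
```

With these particular inputs the edge option costs more despite moving far less data, which illustrates why power cost cannot be left out of the comparison; different prices can easily flip the result.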

The challenge is that AI and ML workloads are going to bring the heat to facilities. Chips already draw 200-plus watts, and next-gen Intel Xeon chips are estimated to draw as much as 330 watts. This makes the case for alternative cooling technologies, including liquid cooling, increasingly pertinent for computing in confined spaces. Immersion cooling is one option; another is direct-to-chip cooling, which Google has adopted for the newest generation of its Tensor Processing Units (TPUs) for AI/ML workloads.
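A rough rack heat-load estimate shows what those chip figures mean for cooling. The rack configuration (two sockets per server, 20 servers per rack) and the 40% allowance for memory, storage, fans and NICs are hypothetical assumptions; the only physics used is that essentially all power drawn by IT equipment becomes heat, and that 1 W is about 3.412 BTU/h.

```python
# Rough rack heat-load estimate for the CPU power figures cited above.
# Hypothetical assumptions: 2 sockets/server, 20 servers/rack, and non-CPU
# components adding 40% on top of CPU power.

WATTS_TO_BTU_PER_HR = 3.412  # 1 W is about 3.412 BTU/h


def rack_heat_kw(cpu_watts: float, sockets: int = 2, servers: int = 20,
                 overhead: float = 0.40) -> float:
    """Approximate heat a rack must reject, in kilowatts."""
    return cpu_watts * sockets * servers * (1 + overhead) / 1000


for tdp in (200, 330):
    kw = rack_heat_kw(tdp)
    print(f"{tdp} W CPUs: ~{kw:.1f} kW per rack "
          f"(~{kw * 1000 * WATTS_TO_BTU_PER_HR:,.0f} BTU/h)")
```

At fixed density, moving from 200 W to 330 W parts raises the rack's heat load by the same 65%, from about 11 kW to about 18.5 kW in this sketch; in a small site with limited room for airflow, that is the pressure behind liquid cooling.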

Other challenges arising from the datacenter changes outlined above:

• Operators can’t staff up small remote sites, yet need them to be resilient. Managing larger numbers of datacenters will require data science and ML algorithms that drive increasingly automated operational processes.

• Even in core markets, some regions of Europe and Asia lack adequate grid power for data centers. On-site power generation and other considerations will factor into how an edge datacenter is sized, and into whether it is economically feasible at all.
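Sizing against a constrained grid connection can be sketched with power usage effectiveness (PUE), the ratio of total facility power to IT power. The IT load, PUE and grid capacity below are hypothetical, and a real design would also account for redundancy (for example N+1 generators) and startup loads.

```python
# On-site generation gap for an edge site with constrained grid power.
# Facility draw = IT load x PUE. All figures are hypothetical placeholders.


def facility_kw(it_load_kw: float, pue: float) -> float:
    """Total facility draw implied by an IT load and a PUE."""
    return it_load_kw * pue


def onsite_generation_kw(it_load_kw: float, pue: float, grid_kw: float) -> float:
    """Capacity that must come from on-site generation (0 if the grid suffices)."""
    return max(0.0, facility_kw(it_load_kw, pue) - grid_kw)


# Hypothetical site: 250 kW of IT load, PUE of 1.4, 300 kW grid connection.
gap = onsite_generation_kw(250, 1.4, 300)
print(f"facility draw: {facility_kw(250, 1.4):.0f} kW, on-site gap: {gap:.0f} kW")
```

Here a 250 kW IT load needs about 350 kW at the meter, leaving a 50 kW shortfall that on-site generation (or a smaller IT footprint) would have to cover, which is exactly the kind of trade-off that decides whether a site pencils out.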

All told, the impact of connected devices will shift some attention (and investment) away from hyperscale-sized datacenters and towards “right-sized” datacenters. What size will they be? How many will be deployed in a given metro area? There is no single right answer, and the market is still in the early stages of testing several categories of solutions. To have a viable edge datacenter market segment to participate in, datacenter vendors need to nurture ecosystems of hardware, software, utilities, communications providers and end-user communities.

A version of this article appeared in Interglobix Magazine.
