Crusoe rolls out edge zones as neocloud race shifts toward distributed AI infrastructure

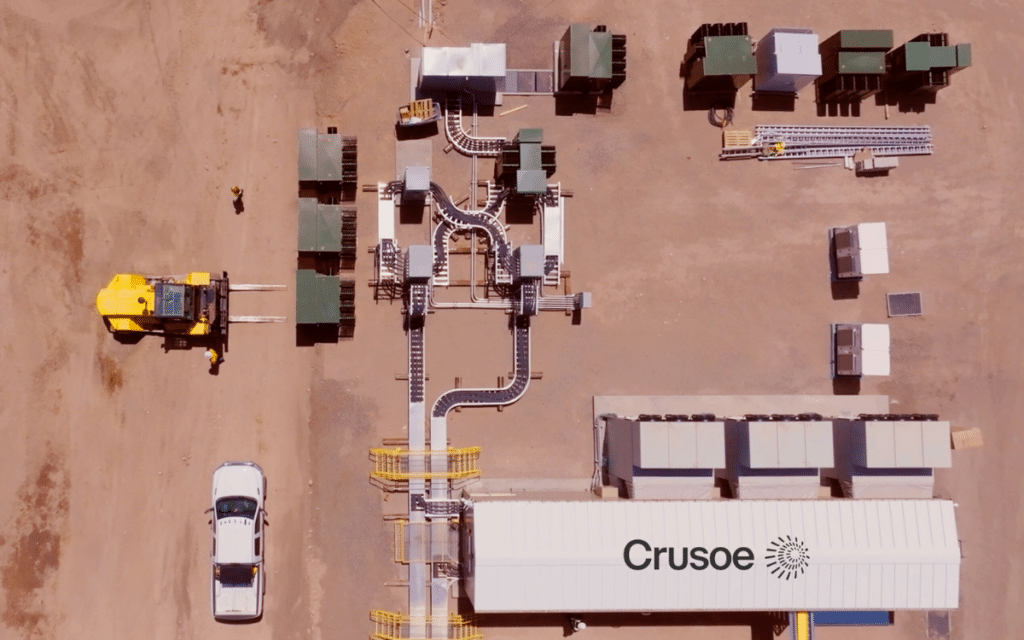

Neocloud provider Crusoe recently announced Crusoe Edge Zones, modular AI data centers that provide low-latency, sovereign and rapidly deployable AI infrastructure at the edge.

These edge zones deliver AI compute in specific locations, chosen by geography and use case, that traditional hyperscale providers do not serve.

“Crusoe Edge Zones powered by Crusoe Spark represent the continued expansion of our vertically integrated ‘AI Factory’ vision,” says Cully Cavness, co-founder, president, and chief strategy officer of Crusoe. “By optimizing these modular AI factories to run both the Crusoe Cloud platform and our Managed Inference product, we are delivering a high-performance, distributed solution that provides the speed, sovereignty, and quality that the next generation of AI requires.”

Because of its vertically integrated approach, Crusoe can deploy new cloud zones quickly, in some instances within three months, expanding cost-efficient AI capacity.

The main use cases are low-latency inference, dedicated enterprise clusters in multiple locations and sovereign AI deployments to serve regulated industries and governments.

Crusoe envisions pairing large-scale campuses for model training with modular, distributed compute for high-performance delivery at the edge.

The company focuses on building energy-efficient, scalable AI infrastructure as part of its mission to accelerate the abundance of energy and intelligence.

Organizations with geographic expansion, low-latency, or sovereign infrastructure requirements can contact Crusoe about deployment opportunities.
