Akamai pushes AI inference to the edge with orchestrated GPU grid across 4,400 sites

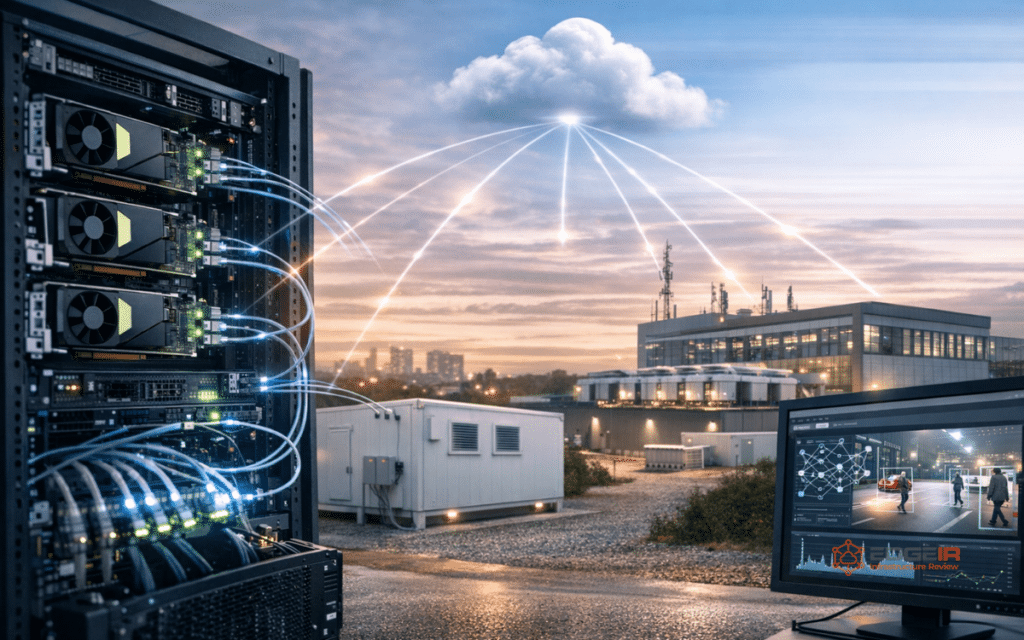

Akamai launched the Akamai Inference Cloud late last year, the first global-scale implementation of NVIDIA AI Grid, enabling distributed AI inference across 4,400 edge locations.

The platform runs AI systems on NVIDIA AI infrastructure and uses Akamai’s network to route workloads for the best possible latency, cost, and performance.
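The routing trade-off can be illustrated with a toy scoring function. This is a minimal sketch, not Akamai’s orchestration logic. The `Site` fields, the weights, and the example sites are hypothetical. Each candidate site is scored on estimated latency and cost per million tokens, and the request goes to the lowest-scoring site that still has free GPU capacity and, optionally, fits a latency budget.

```python
from dataclasses import dataclass


@dataclass
class Site:
    """A hypothetical inference location (all fields are illustrative assumptions)."""
    name: str
    latency_ms: float      # estimated round-trip latency to the user
    cost_per_mtok: float   # assumed cost in USD per million tokens
    free_gpus: int         # GPUs currently available at the site


def pick_site(sites, latency_weight=1.0, cost_weight=10.0, max_latency_ms=None):
    """Return the site with the lowest weighted latency + cost score.

    Sites with no free GPUs, or above the optional latency budget, are skipped.
    Returns None if no site qualifies.
    """
    candidates = [
        s for s in sites
        if s.free_gpus > 0
        and (max_latency_ms is None or s.latency_ms <= max_latency_ms)
    ]
    if not candidates:
        return None
    return min(
        candidates,
        key=lambda s: latency_weight * s.latency_ms + cost_weight * s.cost_per_mtok,
    )


if __name__ == "__main__":
    sites = [
        Site("edge-nearby", latency_ms=8.0, cost_per_mtok=1.20, free_gpus=2),
        Site("edge-regional", latency_ms=25.0, cost_per_mtok=0.90, free_gpus=0),
        Site("central-cluster", latency_ms=80.0, cost_per_mtok=0.50, free_gpus=64),
    ]
    # Real-time request with a tight 30 ms latency budget.
    print(pick_site(sites, max_latency_ms=30).name)
    # Batch-style request with no latency budget and cost weighted heavily.
    print(pick_site(sites, latency_weight=0.1, cost_weight=100.0).name)
```

With these made-up numbers, the real-time request lands on `edge-nearby` and the cost-sensitive request lands on `central-cluster`. A production orchestrator would also weigh live load, model placement, and data locality; the weighted sum here only makes the latency-versus-cost trade-off concrete.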

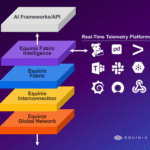

Within Akamai’s AI Grid, intelligent orchestration improves the “tokenomics” of AI applications, meaning the cost and speed of generating each token, which lowers cost, shortens response times, and raises throughput.
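As a rough illustration of what “tokenomics” measures, the cost of serving a million tokens follows from a GPU’s hourly price and its sustained token throughput. The sketch below uses hypothetical figures, not Akamai or NVIDIA pricing. It only shows why higher throughput on the same hardware lowers the cost per token.

```python
def cost_per_million_tokens(gpu_hour_usd: float, tokens_per_second: float) -> float:
    """USD cost to generate one million tokens on a single GPU.

    gpu_hour_usd: assumed hourly price of the GPU.
    tokens_per_second: assumed sustained output throughput.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return gpu_hour_usd / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical GPU priced at $2.00 per hour.
    baseline = cost_per_million_tokens(2.00, 1_000)  # 1,000 tokens/s
    improved = cost_per_million_tokens(2.00, 2_000)  # throughput doubled
    print(f"baseline: ${baseline:.2f} per 1M tokens")
    print(f"improved: ${improved:.2f} per 1M tokens")
```

With these assumed inputs, doubling throughput from 1,000 to 2,000 tokens per second cuts the cost from about $0.56 to about $0.28 per million tokens.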

“AI factories have been purpose-built for training and frontier model workloads and centralized infrastructure will continue to deliver the best tokenomics for those use cases,” says Adam Karon, COO and general manager, Cloud Technology Group, Akamai. “But real-time video, physical AI, and highly concurrent personalized experiences demand inference at the point of contact, not a round trip to a centralized cluster. Our AI Grid intelligent orchestration gives AI factories a way to scale inference outward, leveraging the same distributed architecture that revolutionized content delivery to route AI workloads across 4,400 locations, at the right cost, at the right time.”

By processing requests at the edge, the platform reduces latency for real-time AI use cases in gaming, financial services, media, and retail.
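A back-of-the-envelope calculation shows why distance matters. Light in optical fiber travels at roughly two-thirds of its speed in a vacuum, about 200,000 km/s, so every 1,000 km between a user and a GPU adds around 10 ms of round-trip delay before any routing, queuing, or compute time. The distances below are hypothetical, and real network paths are usually longer than straight-line distance.

```python
# ~200,000 km/s in fiber, i.e. ~200 km per millisecond (approximation).
FIBER_KM_PER_MS = 200.0


def round_trip_ms(distance_km: float) -> float:
    """Minimum round-trip propagation delay over fiber, ignoring all other overheads."""
    return 2 * distance_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    # Hypothetical one-way distances from a user to where inference runs.
    for label, km in [("nearby edge site", 50), ("regional cluster", 1_000), ("distant cluster", 5_000)]:
        print(f"{label}: {round_trip_ms(km):.1f} ms")
```

Under these assumptions, a site 50 km away costs about 0.5 ms of propagation delay, while a cluster 5,000 km away costs about 50 ms, which leaves far less of a real-time latency budget for the inference itself.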

To support this, Akamai’s infrastructure includes thousands of NVIDIA RTX PRO 6000 GPUs, providing high-density compute for enterprise-scale GPU services, large-scale AI workloads, and multimodal inference.

Through this model, the platform lets enterprises deploy adaptive, context-aware AI agents in both centralized and distributed architectures.

Early adoption is evident in the gaming, finance, and media sectors, and a $200 million service agreement that Akamai announced last month signals enterprise demand.
