NVIDIA and T-Mobile push AI-RAN to turn 5G networks into distributed edge compute platforms

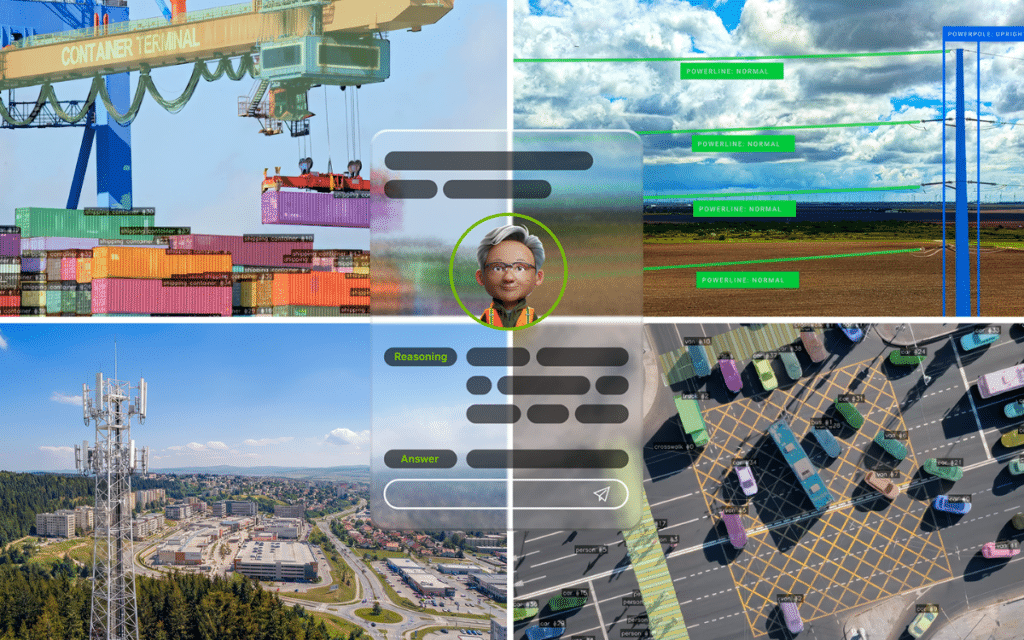

NVIDIA and T-Mobile are working with Nokia and a growing ecosystem of developers to deploy vision AI applications over distributed edge networks, using AI-RAN infrastructure and the NVIDIA Metropolis platform to turn wireless networks into platforms for edge AI computing.

T-Mobile works with NVIDIA and has started to pilot AI-RAN infrastructure to enable edge AI workloads distributed across the network while maintaining robust 5G connectivity.

NVIDIA Metropolis VSS 3 Blueprint accelerates reasoning video analytics through modular architecture, multimodal understanding and agentic search capabilities.

“Turning networks into distributed AI computing platforms to unlock the full potential of physical AI will require ultra-low latency and space time coherency at the network edge for billions of endpoints, and that’s what we’ve built at T-Mobile,” says Srini Gopalan, chief executive officer of T-Mobile. “With the first nationwide 5G Standalone and 5G Advanced network, we are uniquely positioned to help power a future where intelligent systems don’t wait on the cloud but rely on intelligent networks that allow them to act in real time.”

Some of the use cases are smart city operations, automated inspections for utilities, vision-based facility management and real-time industrial safety.

T-Mobile’s full 5G standalone radio access network (RAN) enables low-latency, secure and wide-area connectivity, a requirement to scale physical AI applications.

Leveraging NVIDIA’s infrastructure, developers such as Siemens Energy, Levatas and Fogsphere are building AI agents capable of real-time action and predictive maintenance.

Together, this collaboration aims to turn 5G networks into distributed AI platforms that enable billions of interacting devices in real time.

T-Mobile also recently tapped Red Hat OpenShift to streamline edge and 5G cloud operations.

Article Topics

AI infrastructure | edge AI | edge infrastructure | Nvidia | T-Mobile

Comments